Friday Facts 19: Marlin for Robot Arms

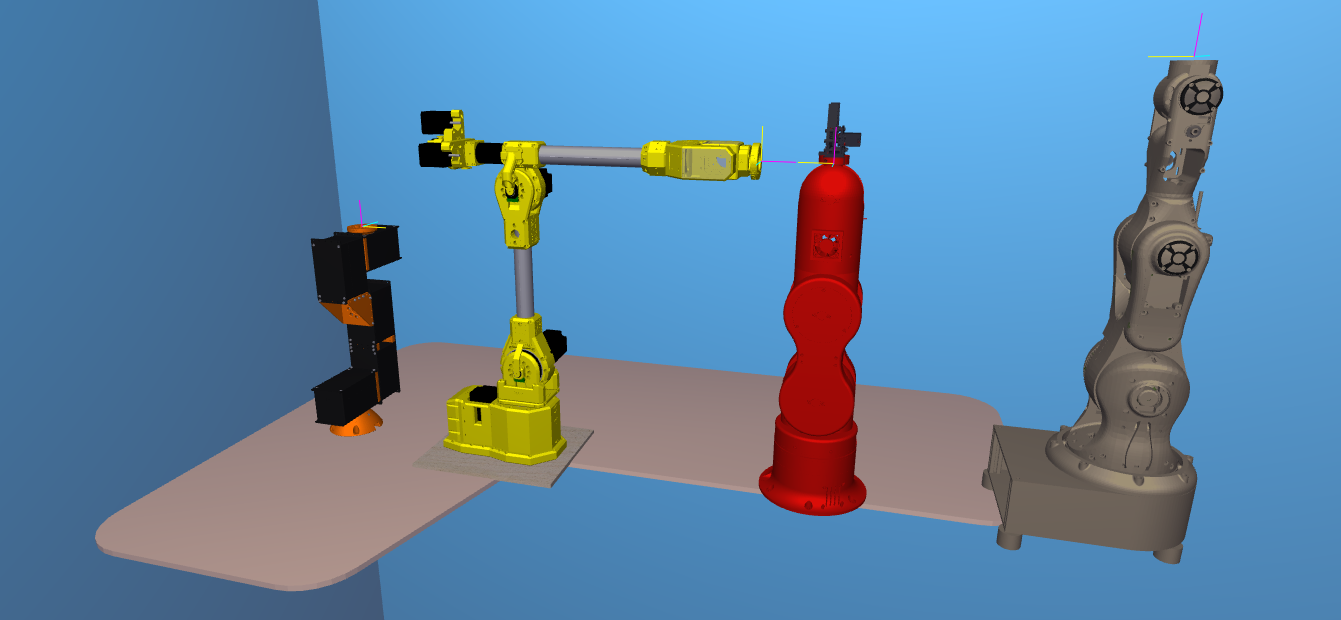

Today I’m going to show you how to set up Marlin firmware (the code in the robot brain) for your board so it thinks it is a robot arm. I will be using the Sixi 3 as my example. When we’re done we’ll have a 6 axis machine ready to receive gcode from apps like Robot Overlord.

Building a custom robot arm is easier if one uses common hardware. Thanks to the popularity of 3D printers it is now super easy to get stepper motors, limit switches, and MCUs that drive five, six, or even more motors. Marlin takes away all the headache and lets anyone talk to a robot with gcode, the standard language of CNC machines like 3D printers, mills, and lathes.

The major steps are:

- Fork Marlin

- Customize it

- Flash your board

- Test it

Fork Marlin

To “fork” is to make a special copy. It’s special because it includes a feature to update your copy with changes to the original. When the Marlin developers improve something, you can press a button and get all their updates.

The code for Marlin is available at https://github.com/MarlinFirmware/. I have a fork for robot arm Sixi 3 already started. You can get that fork as well right here: https://github.com/MarginallyClever/Marlin/

Make sure that (1) the branch is set to sixi3, then (2) click the Code button and (3) choose Open with GitHub Desktop or Download ZIP. If you download the ZIP you’ll have to unpack it somewhere like Documents/Github/Marlin.

Customize Marlin

Here’s a list of lines in Configuration.h that I’ve changed. The bold parts are unchanged so you can use that to search the file. The stepper motors in Marlin are named – internally only – as X, Y, Z, I, J, K.

#define STRING_CONFIG_H_AUTHOR "(Marginally Clever, Sixi3)"

#define MOTHERBOARD BOARD_RUMBA

#define CUSTOM_MACHINE_NAME "Sixi 3 robot arm"

#define EXTRUDERS 0

Because it’s a RUMBA board I also had to redefine a few of the pin settings. Normally all supported boards are defined in Marlin/src/pins/*.

#define I_STEP_PIN 23

#define I_DIR_PIN 22

#define I_ENABLE_PIN 24

#define J_STEP_PIN 26

#define J_DIR_PIN 25

#define J_ENABLE_PIN 27

#define K_STEP_PIN 29

#define K_DIR_PIN 28

#define K_ENABLE_PIN 39

#undef Y_MAX_PIN

#undef Z_MIN_PIN

#undef Z_MAX_PIN

#define I_MIN_PIN 34

#define J_MIN_PIN 33

#define K_MIN_PIN 32
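When pins are assigned by hand like this, it is easy to give two signals the same pin number. Here is a minimal sketch of a compile-time check, assuming a C++14 compiler. It is not part of Marlin: `kSixiPins` and `all_unique` are hypothetical names, and the numbers are simply copied from the overrides above.

```cpp
#include <cstddef>

// Pin numbers copied from the overrides above.
constexpr int kSixiPins[] = {
    23, 22, 24,   // I step, dir, enable
    26, 25, 27,   // J step, dir, enable
    29, 28, 39,   // K step, dir, enable
    34, 33, 32    // I, J, K min endstops
};

// Hypothetical helper (not a Marlin function): true when no value appears twice.
template <std::size_t N>
constexpr bool all_unique(const int (&pins)[N]) {
    for (std::size_t a = 0; a < N; ++a)
        for (std::size_t b = a + 1; b < N; ++b)
            if (pins[a] == pins[b]) return false;
    return true;
}

// Stops the build if two signals were given the same pin by mistake.
static_assert(all_unique(kSixiPins), "two signals share a pin");
```

This only checks the pins listed here. It does not know about the X, Y, Z pins or anything else the board file already uses.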

The type of driver and the external name of each motor is next.

#define X_DRIVER_TYPE A4988

#define Y_DRIVER_TYPE A4988

#define Z_DRIVER_TYPE A4988

//#define X2_DRIVER_TYPE A4988

//#define Y2_DRIVER_TYPE A4988

//#define Z2_DRIVER_TYPE A4988

//#define Z3_DRIVER_TYPE A4988

//#define Z4_DRIVER_TYPE A4988

#define I_DRIVER_TYPE A4988

#define J_DRIVER_TYPE A4988

#define K_DRIVER_TYPE A4988

...

#ifdef I_DRIVER_TYPE

#define AXIS4_NAME 'U' // :['A', 'B', 'C', 'U', 'V', 'W']

#define AXIS4_ROTATES

#endif

#ifdef J_DRIVER_TYPE

#define AXIS5_NAME 'V' // :['B', 'C', 'U', 'V', 'W']

#define AXIS5_ROTATES

#endif

#ifdef K_DRIVER_TYPE

#define AXIS6_NAME 'W' // :['C', 'U', 'V', 'W']

#define AXIS6_ROTATES

#endif

Limit switches are tricky because the original Sixi 3 still doesn’t have them. (The plan is a new PCB that has always-on sensors.) For Sixi 3 only, I have to trick the sensor code. When the robot turns on it will believe it has already homed, no matter where it is. A better robot with switches would call G28 to find home, and then the invert setting would depend on the type of switch (normally open vs normally closed). I don’t remember what the plug settings do.
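To make the link between switch type and the invert setting concrete, here is a small sketch. It rests on two assumptions: that Marlin counts an endstop as triggered when the pin level differs from the `*_ENDSTOP_INVERTING` flag, and that the switch is wired between signal and ground with a pull-up resistor. `endstop_triggered` and `pin_level` are illustrative names, not Marlin functions.

```cpp
// Assumed rule: the endstop counts as triggered when the pin level differs from the invert flag.
constexpr bool endstop_triggered(bool pin_is_high, bool inverting) {
    return pin_is_high != inverting;
}

// Pin level for a switch wired between signal and ground with a pull-up.
// Normally closed: pressing opens the circuit, the pull-up wins, the pin reads high.
// Normally open:   pressing closes the circuit to ground, the pin reads low.
constexpr bool pin_level(bool normally_closed, bool pressed) {
    return normally_closed ? pressed : !pressed;
}

// Normally closed switch: inverting = false reports "triggered" only while pressed.
static_assert(endstop_triggered(pin_level(true, true), false), "NC pressed");
static_assert(!endstop_triggered(pin_level(true, false), false), "NC released");

// Normally open switch: the same behavior needs inverting = true.
static_assert(endstop_triggered(pin_level(false, true), true), "NO pressed");
static_assert(!endstop_triggered(pin_level(false, false), true), "NO released");
```

If your switches are wired differently (for example to 5V with a pull-down), the levels flip and so does the setting you need.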

#define USE_XMIN_PLUG

#define USE_YMIN_PLUG

#define USE_ZMIN_PLUG

#define USE_IMIN_PLUG

#define USE_JMIN_PLUG

#define USE_KMIN_PLUG

...

#define X_MIN_ENDSTOP_INVERTING false

#define Y_MIN_ENDSTOP_INVERTING false

#define Z_MIN_ENDSTOP_INVERTING false

#define I_MIN_ENDSTOP_INVERTING false

#define J_MIN_ENDSTOP_INVERTING false

#define K_MIN_ENDSTOP_INVERTING false

#define X_MAX_ENDSTOP_INVERTING true

#define Y_MAX_ENDSTOP_INVERTING true

#define Z_MAX_ENDSTOP_INVERTING true

#define I_MAX_ENDSTOP_INVERTING false

#define J_MAX_ENDSTOP_INVERTING false

#define K_MAX_ENDSTOP_INVERTING false

Motors also need gearbox and speed settings. Sixi 3 has a 70:1 harmonic gearbox and then a further pulley reduction in each unit. Since each motor does 200 steps per turn, that makes 105 steps per degree!

#define DEFAULT_AXIS_STEPS_PER_UNIT { 105, 105, 105, 105, 105, 105 }
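The arithmetic behind that number can be sketched as follows. The post gives the 70:1 gearbox and 200 steps per turn but not the pulley ratio, so the sketch works backwards: 105 steps per degree implies a pulley reduction of about 2.7:1 if the drivers run full steps. That 2.7 is inferred from the other numbers, not a measured value, and the function names are made up for this example.

```cpp
// Steps the motor must take to turn the joint output by one degree.
// motor_steps_per_rev: full steps per motor revolution (200 here)
// microsteps:          driver microstepping factor (1 = full steps, an assumption)
// gearbox_ratio:       harmonic gearbox reduction (70 for Sixi 3)
// pulley_ratio:        further belt/pulley reduction (inferred, see above)
constexpr double steps_per_degree(double motor_steps_per_rev, double microsteps,
                                  double gearbox_ratio, double pulley_ratio) {
    return motor_steps_per_rev * microsteps * gearbox_ratio * pulley_ratio / 360.0;
}

// Pulley reduction needed to reach a target steps-per-degree value.
constexpr double implied_pulley_ratio(double target_steps_per_degree,
                                      double motor_steps_per_rev,
                                      double microsteps, double gearbox_ratio) {
    return target_steps_per_degree * 360.0 /
           (motor_steps_per_rev * microsteps * gearbox_ratio);
}
```

For example, `implied_pulley_ratio(105, 200, 1, 70)` comes out at about 2.7, and `steps_per_degree(200, 1, 70, 2.7)` gives back about 105. If your drivers are set to microstep, multiply accordingly and update DEFAULT_AXIS_STEPS_PER_UNIT to match.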

105 steps per degree * 5 deg/s = 525 steps per second. Impressive for such tiny NEMA17 motors. It might not be fast but it works and it’s affordable. Cheap, fast, good… pick two.

#define DEFAULT_MAX_FEEDRATE { 5,5,5,5,5,5 }

#define CLASSIC_JERK // uncommented this to turn it on

#define S_CURVE_ACCELERATION // uncommented this to turn it on
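To get a feel for what 5 deg/s means in practice, here is a rough sketch of the numbers. It ignores acceleration, so real moves take a little longer. The helper names are invented for this example, and the units-per-minute conversion assumes the gcode F word is read per minute, which is how Marlin normally treats linear moves.

```cpp
// Step pulses per second needed for a given joint speed.
constexpr double step_rate(double steps_per_degree, double deg_per_sec) {
    return steps_per_degree * deg_per_sec;
}

// Time for a move at constant speed (acceleration ignored).
constexpr double seconds_for_move(double degrees, double deg_per_sec) {
    return degrees / deg_per_sec;
}

// Convert a speed in deg/s to the per-minute value a gcode F word would carry (assumed units).
constexpr double feedrate_per_minute(double deg_per_sec) {
    return deg_per_sec * 60.0;
}

static_assert(step_rate(105, 5) == 525, "525 steps per second at full speed");
static_assert(seconds_for_move(360, 5) == 72, "a full turn takes 72 seconds");
static_assert(feedrate_per_minute(5) == 300, "5 deg/s is 300 deg/min");
```

So a joint needs over a minute to sweep its whole 360 degree range at the configured maximum.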

I make sure to leave motors on so the arm doesn’t suddenly “go limp” at the worst time.

#define DISABLE_X false

#define DISABLE_Y false

#define DISABLE_Z false

#define DISABLE_I false

#define DISABLE_J false

#define DISABLE_K false

Range of motion is important: Marlin won’t let you go outside the limits. Remember this code was written for square box 3D printers, so some of the terms are a little silly for our needs.

// The size of the printable area

#define X_BED_SIZE 360

#define Y_BED_SIZE 360

// Travel limits (linear=mm, rotational=°) after homing, corresponding to endstop positions.

#define X_MIN_POS 0

#define X_MAX_POS 360

#define Y_MIN_POS 0

#define Y_MAX_POS 360

#define Z_MIN_POS 0

#define Z_MAX_POS 360

#define I_MIN_POS 0

#define I_MAX_POS 360

#define J_MIN_POS 0

#define J_MAX_POS 360

#define K_MIN_POS 0

#define K_MAX_POS 360

#define MIN_SOFTWARE_ENDSTOPS // but no sub-values like MIN_SOFTWARE_ENDSTOP_X

#define MAX_SOFTWARE_ENDSTOPS // but no sub-values like MAX_SOFTWARE_ENDSTOP_X

#define EEPROM_SETTINGS // save important data to EEPROM

#define SDSUPPORT

#define REPRAP_DISCOUNT_SMART_CONTROLLER // or your favorite flavor here

#define NUM_SERVOS 1 // for the gripper
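Going back to the travel limits for a moment: with software endstops on, a requested angle outside MIN_POS..MAX_POS gets pulled back inside the range. The sketch below shows that idea, assuming the limit is applied as a simple clamp. `clamp_to_limits` is an illustrative name, not Marlin’s own function.

```cpp
// Limits copied from the *_MIN_POS / *_MAX_POS values above, in degrees.
constexpr double kMinPos = 0.0;
constexpr double kMaxPos = 360.0;

// Assumed behavior of a software endstop: pull the target back inside the range.
constexpr double clamp_to_limits(double target, double lo, double hi) {
    return target < lo ? lo : (target > hi ? hi : target);
}

static_assert(clamp_to_limits(90.0, kMinPos, kMaxPos) == 90.0, "inside the range is untouched");
static_assert(clamp_to_limits(400.0, kMinPos, kMaxPos) == 360.0, "too high stops at the max");
static_assert(clamp_to_limits(-10.0, kMinPos, kMaxPos) == 0.0, "negative stops at 0, it does not wrap to 350");
```

The last case matters for a rotating joint: with a 0 to 360 range, asking for -10 degrees does not wrap around. If your joints need to swing both ways from center, you may want limits like -180 and 180 instead.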

Flash your board

Press the Compile button (1) to check for errors. Press the Upload button (2) to send it to your connected device. Press the Connect button (3) to open a serial monitor and check that your device says it is now a Marlin device. If all goes well, you’re ready to rock!

Test your new device

Even before your board is installed in an arm you should be able to home it with G28 and move it with G0/G1. Remember: every bug is just a test you didn’t run!
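If you want to generate test moves from your own program, here is a minimal sketch that builds one G0 line from six joint angles. It assumes the axis letters from the configuration above (X, Y, Z, then U, V, W for axes 4 to 6). `make_g0` is a made-up helper, and sending the text over the serial port is left out.

```cpp
#include <array>
#include <cstddef>
#include <cstdio>
#include <string>

// Build one G0 move for six joint angles in degrees.
// Axis letters follow the configuration above: X, Y, Z, U, V, W.
std::string make_g0(const std::array<double, 6>& angles_deg) {
    static const char letters[6] = {'X', 'Y', 'Z', 'U', 'V', 'W'};
    std::string line = "G0";
    for (std::size_t i = 0; i < angles_deg.size(); ++i) {
        char word[32];
        // One axis word per joint, three decimal places.
        std::snprintf(word, sizeof(word), " %c%.3f", letters[i], angles_deg[i]);
        line += word;
    }
    return line;
}
```

Calling `make_g0` with the angles 10, 20, 30, 0, 0, 0 produces `G0 X10.000 Y20.000 Z30.000 U0.000 V0.000 W0.000`. Send G28 first so Marlin considers the machine homed, then try small moves.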

Final thoughts

Now that you have a 3D printer board set up to run Marlin, it should be able to receive angle values as gcode. Each set is one forward kinematic pose of the arm. Moving between two poses will send the arm in curving arcs. Lots of poses close together will create the look of straight lines. Planning all those poses is easy for apps like Robot Overlord. That’s why I wrote it!
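Here is a toy illustration of why moves between poses curve and why closely spaced poses look straight. It uses a hypothetical flat arm with two links of length 1, not the Sixi 3, and assumes the joints move in proportion to each other during a move. It measures how far the tool is from the straight line at the halfway point.

```cpp
#include <cmath>

struct Point { double x, y; };

// Forward kinematics of a hypothetical planar arm with two links (not the Sixi 3).
// Angles in degrees; the elbow angle is measured relative to the first link.
Point forward_kinematics(double shoulder_deg, double elbow_deg,
                         double l1 = 1.0, double l2 = 1.0) {
    const double kDegToRad = std::acos(-1.0) / 180.0;
    const double a = shoulder_deg * kDegToRad;
    const double b = (shoulder_deg + elbow_deg) * kDegToRad;
    return { l1 * std::cos(a) + l2 * std::cos(b),
             l1 * std::sin(a) + l2 * std::sin(b) };
}

// Distance between the tool position halfway through a joint-space move
// and the midpoint of the straight line joining the start and end tool positions.
double midpoint_deviation(double s0, double e0, double s1, double e1) {
    const Point start = forward_kinematics(s0, e0);
    const Point end   = forward_kinematics(s1, e1);
    const Point mid   = forward_kinematics((s0 + s1) / 2.0, (e0 + e1) / 2.0);
    const double cx = (start.x + end.x) / 2.0;
    const double cy = (start.y + end.y) / 2.0;
    return std::hypot(mid.x - cx, mid.y - cy);
}
```

Swinging the shoulder from 0 to 90 degrees in one move puts the tool about 0.59 units off the straight line at the halfway point. Splitting the same sweep into 1 degree moves keeps each piece within about 0.0001 units of its own straight segment. Note that this only shows each small piece is nearly straight. Making the whole path a straight line also means choosing the in-between poses on that line, which is the planning job an app like Robot Overlord does.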

Got more questions? Something out of date in this post? Join us on Discord.