How to translate Makelangelo software

As of Makelangelo Software 7.23.0 it’s now easy for you to translate Makelangelo software into your favorite language. Here’s how!


You want to use your old printer control board to DIY a Makelangelo robot, but the firmware does not support your board yet. No two boards have quite the same features or pin settings. Ok! Here’s how to make it work for you and everyone after you.

From January 18 to 30 I will be out of the country for some downtime. Jin will still be here testing machines and filling orders, but no custom builds of Makelangelo Huge will be happening.

In the development branch of Makelangelo software there’s always lots going on.

Get the Makelangelo 7.22.6 experimental here.

GitHub user Mishafarms has added Raspberry Pi camera support: if you run the software on a Pi with a camera, it can detect the camera and use it as a source of images to convert. Nicely done!

I’ve put in a TON of work to improve the top speed and reduce stutter. I’m currently drawing at 100mm/s and moving (without drawing) at 400mm/s, so it’s now faster and bigger than an AxiDraw plotter. The motors move so smoothly now that they sound almost musical.

Another nice-to-have: the menus on the LCD panel are more responsive, and filenames on SD cards are now shown at full length instead of being cut to 8 characters.

Thanks to the patient feedback of user Joram Neumark, I’ve been working to improve support for European users. German computers (or computers set to the German locale) write numbers with a decimal comma instead of a decimal point, and the robot does not understand them. It doesn’t know it’s in Germany! So instead I’m making sure all numbers written to files use the format the robot expects.
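In Java, one way to do this is to pass an explicit locale to every number-formatting call instead of relying on the computer’s default. The sketch below assumes a `G1 X… Y…` move with three decimal places; `GcodeNumberFormat` and its methods are hypothetical names for illustration, not the actual Makelangelo code.

```java
import java.util.Locale;

/** Sketch: write gcode numbers the same way whatever the computer's locale is. */
public class GcodeNumberFormat {

    /** Formats a move with Locale.ROOT, so the decimal separator is always '.'. */
    static String moveTo(double x, double y) {
        return String.format(Locale.ROOT, "G1 X%.3f Y%.3f", x, y);
    }

    /** The problem case: a German locale writes ',' as the decimal separator. */
    static String moveToGerman(double x, double y) {
        return String.format(Locale.GERMANY, "G1 X%.3f Y%.3f", x, y);
    }

    public static void main(String[] args) {
        System.out.println(moveTo(12.5, -3.25));       // G1 X12.500 Y-3.250
        System.out.println(moveToGerman(12.5, -3.25)); // G1 X12,500 Y-3,250
    }
}
```

`Locale.ROOT` is Java’s neutral locale, so `moveTo` produces the same text even after the default locale has been set to German.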

Please post to the forums with your experience!

Let’s talk about the Makelangelo Software, how it’s built, and what I’m doing to make it better. I hope we can continue this discussion in the forums.

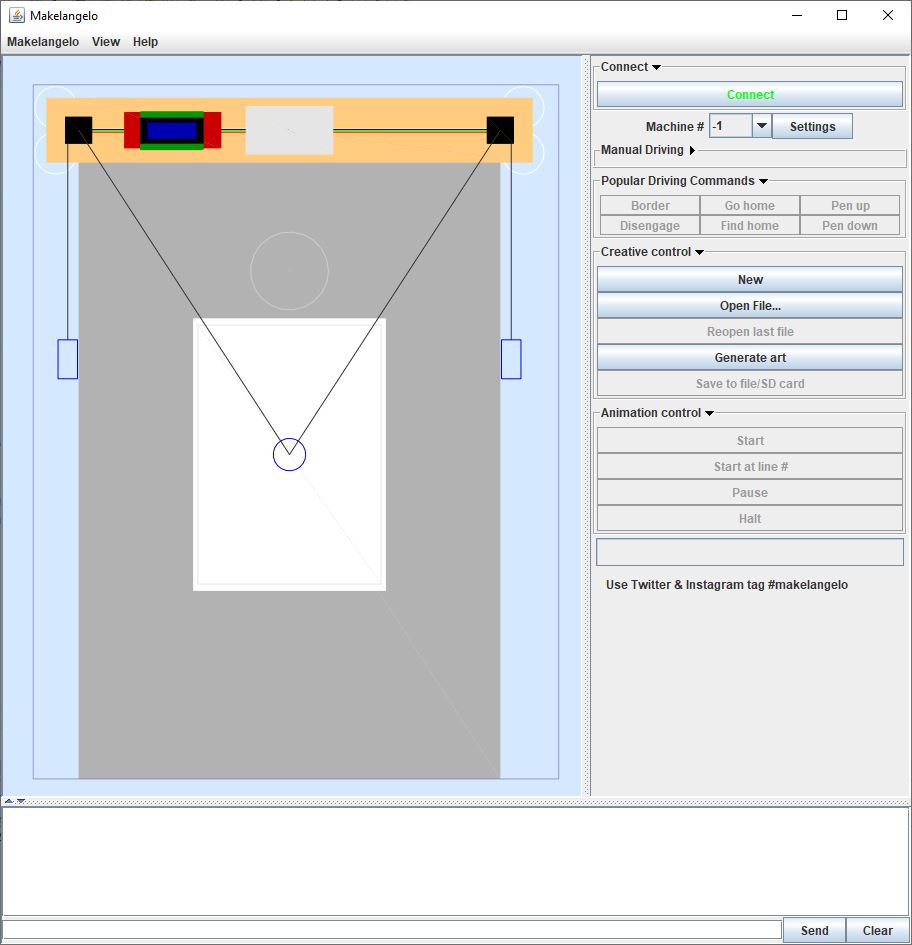

The current version, as of the end of 2019, is 7.21.0. The top left is a what-you-see-is-what-you-get (WYSIWYG) preview. The top right holds the creative controls, the connection to the robot, and the active controls (once connected). The bottom shows status messages from the app and the dialog with the connected robot. At the very bottom is a field for sending commands to the connected robot. The entire bottom section can be hidden or unhidden with the arrows on the left.

A very special thanks to all of you who have downloaded the software, given great feedback, made pull requests, and those who have donated to help support its development. You give me the energy to keep making it better. Stay awesome!

When I built the first Makelangelo robot, it understood gcode, a human-readable text format used by CNC machines such as 3D printers and milling machines. I was typing gcode to the robot one line at a time through the Arduino Serial GUI. Works great; doesn’t scale. I needed a tool to deliver the gcode to the robot one line at a time, and so Makelangelo software was born.
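A minimal sketch of such a line-by-line sender, assuming the robot answers each command with a reply starting with `ok` before it is ready for the next one (a common convention in 3D printer firmware, but an assumption here). `RobotLink`, `GcodeStreamer` and `FakeRobot` are hypothetical names; a real version would wrap a serial port instead of the fake robot used for testing.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.StringReader;
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

/** Hypothetical connection to the robot; a real one would wrap a serial port. */
interface RobotLink {
    void send(String line) throws IOException;
    String readLine() throws IOException; // blocks until the robot replies
}

public class GcodeStreamer {
    /**
     * Sends gcode one line at a time, waiting for an "ok" before the next line.
     * Blank lines and ';' comments are not sent. Returns the number of lines sent.
     */
    static int stream(BufferedReader gcode, RobotLink robot) throws IOException {
        int sent = 0;
        String line;
        while ((line = gcode.readLine()) != null) {
            int comment = line.indexOf(';');
            if (comment >= 0) line = line.substring(0, comment);
            line = line.trim();
            if (line.isEmpty()) continue;
            robot.send(line);
            sent++;
            // Wait for the acknowledgement; other replies (status messages) are skipped.
            String reply;
            do {
                reply = robot.readLine();
                if (reply == null) throw new IOException("robot disconnected");
            } while (!reply.startsWith("ok"));
        }
        return sent;
    }

    /** Fake robot for trying the streamer without hardware: records lines, always says "ok". */
    static class FakeRobot implements RobotLink {
        final List<String> received = new ArrayList<>();
        private final Deque<String> replies = new ArrayDeque<>();

        public void send(String line) { received.add(line); replies.add("ok"); }
        public String readLine() { return replies.poll(); }
    }

    public static void main(String[] args) throws IOException {
        String file = "G28\n; start drawing\nG1 X10 Y10 ; first move\n\nG1 X20 Y0\n";
        FakeRobot robot = new FakeRobot();
        int n = stream(new BufferedReader(new StringReader(file)), robot);
        System.out.println(n + " lines sent: " + robot.received);
    }
}
```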

Later I added a GUI with a WYSIWYG preview. The preview system read the gcode in the file and drew a picture from it every frame, up to 30 times a second.
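As a rough illustration of what a preview has to do each frame, here is a tiny gcode reader that turns moves into line segments. It assumes absolute coordinates and treats `G1` as a drawing move and `G0` as a travel move; real Makelangelo gcode may signal pen state differently, and `GcodePreview` is a hypothetical name.

```java
import java.util.ArrayList;
import java.util.List;

public class GcodePreview {
    /** One straight line the preview should draw, from (x0, y0) to (x1, y1). */
    record Segment(double x0, double y0, double x1, double y1) {}

    /**
     * Turns gcode lines into drawable segments. Assumes absolute coordinates,
     * G1 = drawing move, G0 = travel move (tracked but not drawn).
     * Other commands, and spellings like G01, are ignored.
     */
    static List<Segment> parse(List<String> lines) {
        List<Segment> segments = new ArrayList<>();
        double x = 0, y = 0;
        for (String raw : lines) {
            int comment = raw.indexOf(';');
            String line = (comment >= 0 ? raw.substring(0, comment) : raw).trim().toUpperCase();
            if (line.isEmpty()) continue;
            String[] words = line.split("\\s+");
            boolean draw = words[0].equals("G1");
            if (!draw && !words[0].equals("G0")) continue;
            double nx = x, ny = y;
            for (int i = 1; i < words.length; i++) {
                if (words[i].length() < 2) continue;
                char axis = words[i].charAt(0);
                double value = Double.parseDouble(words[i].substring(1));
                if (axis == 'X') nx = value;
                else if (axis == 'Y') ny = value;
            }
            if (draw) segments.add(new Segment(x, y, nx, ny));
            x = nx;
            y = ny;
        }
        return segments;
    }

    public static void main(String[] args) {
        List<String> gcode = List.of("G0 X10 Y0", "G1 X10 Y10", "G1 X0 Y10 ; top edge", "M300");
        for (Segment s : parse(gcode)) System.out.println(s);
    }
}
```

Running this parse 30 times a second repeats the same work whenever the file has not changed.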

I had an ugly way to turn a bitmap (like a photo or meme) into gcode. It was too hard! So I added some Converter classes to the app. Each converter generated gcode, which the rest of the system then treated as normal. The app was still reading gcode every frame, AND if you changed a setting like “pen up angle”, the gcode had to be regenerated by the style system.

I need to segue for a moment here. The Makelangelo is a robot that only draws lines, described with gcode. There are two ways in the software to make a set of lines that will be turned into gcode. One is a Converter class, which turns pictures into lines. The other is a Generator class, which makes up lines algorithmically (aka procedurally). Both are a type of Manipulator. Pure vector files like DXF and SVG are not really converted, but that’s a discussion for another time. My point is that “Manipulator” is the word for both types of classes-that-make-lines.
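The relationship can be sketched as one common interface with two kinds of implementation. Everything here (`Manipulator`, `ThresholdConverter`, `SpiralGenerator`, the `Line` record) is hypothetical and far simpler than the real classes: the converter draws a short line through each dark pixel of a grayscale grid, and the generator makes a square spiral from nothing but a segment count.

```java
import java.util.ArrayList;
import java.util.List;

/** A straight line from (x0, y0) to (x1, y1). */
record Line(double x0, double y0, double x1, double y1) {}

/** Hypothetical common type for every class-that-makes-lines. */
interface Manipulator {
    List<Line> makeLines();
}

/** A Converter turns a picture into lines. Pixels are grayscale, 0 = black, 255 = white. */
class ThresholdConverter implements Manipulator {
    private final int[][] pixels;

    ThresholdConverter(int[][] pixels) { this.pixels = pixels; }

    /** Draws one short horizontal line through every pixel darker than mid-gray. */
    @Override
    public List<Line> makeLines() {
        List<Line> lines = new ArrayList<>();
        for (int row = 0; row < pixels.length; row++) {
            for (int col = 0; col < pixels[row].length; col++) {
                if (pixels[row][col] < 128) lines.add(new Line(col, row, col + 1, row));
            }
        }
        return lines;
    }
}

/** A Generator makes up lines from an algorithm alone: here, a square spiral. */
class SpiralGenerator implements Manipulator {
    private final int segments;

    SpiralGenerator(int segments) { this.segments = segments; }

    @Override
    public List<Line> makeLines() {
        int[][] directions = {{1, 0}, {0, 1}, {-1, 0}, {0, -1}}; // right, up, left, down
        List<Line> lines = new ArrayList<>();
        double x = 0, y = 0;
        for (int i = 0; i < segments; i++) {
            int length = i / 2 + 1; // 1, 1, 2, 2, 3, 3, ...
            int[] d = directions[i % 4];
            double nx = x + d[0] * length, ny = y + d[1] * length;
            lines.add(new Line(x, y, nx, ny));
            x = nx;
            y = ny;
        }
        return lines;
    }
}

public class ManipulatorDemo {
    public static void main(String[] args) {
        int[][] picture = {{0, 255, 0}, {255, 40, 255}};
        List<Manipulator> manipulators = List.of(new ThresholdConverter(picture), new SpiralGenerator(8));
        // The rest of the system only needs to know it has a Manipulator.
        for (Manipulator m : manipulators) {
            System.out.println(m.getClass().getSimpleName() + " made " + m.makeLines().size() + " lines");
        }
    }
}
```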

Recently I’ve been cleaning up the mess with Manipulators. Instead of writing gcode to a file, each Manipulator now controls an instance of a Turtle, just like LOGO on the Commodore 64.

The turtle’s history remembers moves, pen up/down, and color changes. After the Manipulator is done moving the turtle, the turtle’s history is read and interpreted by a final stage that generates the gcode.
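A minimal sketch of that idea: a turtle that records pen up, pen down, color changes and moves, plus a separate final stage that reads the history and writes gcode. The gcode choices are assumptions for illustration only (pen height as `G0 Z<angle>` with made-up angles, a color change as an `M0` pause), and all names are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;

public class TurtleDemo {
    /** The kinds of things the turtle remembers. */
    enum Kind { MOVE, PEN_UP, PEN_DOWN, COLOR }

    /** One history entry: where the turtle was, and for COLOR the new color's name. */
    record Step(Kind kind, double x, double y, String color) {}

    /** A LOGO-style turtle that records what it does instead of writing gcode. */
    static class Turtle {
        final List<Step> history = new ArrayList<>();
        private double x, y;
        private double heading; // degrees, 0 = along +X

        void penUp() { history.add(new Step(Kind.PEN_UP, x, y, null)); }
        void penDown() { history.add(new Step(Kind.PEN_DOWN, x, y, null)); }
        void setColor(String name) { history.add(new Step(Kind.COLOR, x, y, name)); }
        void turn(double degrees) { heading += degrees; }

        void moveTo(double nx, double ny) {
            x = nx;
            y = ny;
            history.add(new Step(Kind.MOVE, x, y, null));
        }

        void forward(double distance) {
            double r = Math.toRadians(heading);
            moveTo(x + distance * Math.cos(r), y + distance * Math.sin(r));
        }
    }

    /** Final stage: interpret the history as gcode. The commands chosen here are assumptions. */
    static List<String> toGcode(Turtle turtle, double penUpAngle, double penDownAngle) {
        List<String> out = new ArrayList<>();
        for (Step s : turtle.history) {
            switch (s.kind()) {
                case PEN_UP -> out.add(String.format(Locale.ROOT, "G0 Z%.0f", penUpAngle));
                case PEN_DOWN -> out.add(String.format(Locale.ROOT, "G0 Z%.0f", penDownAngle));
                case COLOR -> out.add("M0 ; change pen to " + s.color());
                case MOVE -> out.add(String.format(Locale.ROOT, "G1 X%.3f Y%.3f", s.x(), s.y()));
            }
        }
        return out;
    }

    public static void main(String[] args) {
        Turtle t = new Turtle();
        t.setColor("black");
        t.penDown();
        for (int side = 0; side < 4; side++) { // a 10 x 10 square, LOGO style
            t.forward(10);
            t.turn(90);
        }
        t.penUp();
        toGcode(t, 90, 30).forEach(System.out::println);
    }
}
```

Because the pen angles are parameters of `toGcode` rather than part of the history, changing a setting like pen up angle only means rerunning this last stage, not the Manipulator that moved the turtle.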

This method has many benefits:

Having made this change, I have a new perspective. Instead of seeing the trees (writing to a file, dealing with exceptions, reading it back in, and all the minutiae of the file’s contents), I now see the forest called Turtle, and everything done to the Turtle before drawing is an art pipeline. Converters and generators both produce a Turtle from some (or zero) other data at the head of the pipeline. The middle steps each take a Turtle, change it based on some other data, and pass it to the next step. The tail of the pipeline saves the Turtle in whatever format you want.
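The head, middle and tail can be sketched with Java’s standard functional interfaces: a `Supplier` for the head, a list of `UnaryOperator` steps for the middle, and a `Function` for the tail. The two-record `Turtle` here is a hypothetical stand-in for the real class, and the tail writes SVG path data (`M` for pen-up moves, `L` for drawn lines) only to show that the output format is the tail’s choice.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;
import java.util.StringJoiner;
import java.util.function.Function;
import java.util.function.Supplier;
import java.util.function.UnaryOperator;

public class ArtPipeline {
    /** One turtle move; penDown says whether the move draws a line. */
    record Move(double x, double y, boolean penDown) {}

    /** Minimal stand-in for a Turtle: just its list of moves. */
    record Turtle(List<Move> moves) {}

    /** Head makes a Turtle, each middle step transforms it, the tail turns it into an output. */
    static <T> T run(Supplier<Turtle> head, List<UnaryOperator<Turtle>> middle, Function<Turtle, T> tail) {
        Turtle turtle = head.get();
        for (UnaryOperator<Turtle> step : middle) {
            turtle = step.apply(turtle);
        }
        return tail.apply(turtle);
    }

    /** Builds a middle step that applies f to every move. */
    private static UnaryOperator<Turtle> mapMoves(UnaryOperator<Move> f) {
        return t -> {
            List<Move> out = new ArrayList<>();
            for (Move m : t.moves()) out.add(f.apply(m));
            return new Turtle(out);
        };
    }

    static UnaryOperator<Turtle> scale(double factor) {
        return mapMoves(m -> new Move(m.x() * factor, m.y() * factor, m.penDown()));
    }

    static UnaryOperator<Turtle> translate(double dx, double dy) {
        return mapMoves(m -> new Move(m.x() + dx, m.y() + dy, m.penDown()));
    }

    /** Tail: SVG path data, "M" for pen-up moves and "L" for drawn lines. */
    static String toSvgPath(Turtle t) {
        StringJoiner path = new StringJoiner(" ");
        for (Move m : t.moves()) {
            path.add(String.format(Locale.ROOT, "%s%.1f %.1f", m.penDown() ? "L" : "M", m.x(), m.y()));
        }
        return path.toString();
    }

    public static void main(String[] args) {
        // Head: a tiny generator that makes a unit square from zero input data.
        Supplier<Turtle> square = () -> new Turtle(List.of(
                new Move(0, 0, false), new Move(1, 0, true), new Move(1, 1, true),
                new Move(0, 1, true), new Move(0, 0, true)));
        String svg = run(square, List.of(scale(50), translate(10, 10)), ArtPipeline::toSvgPath);
        System.out.println(svg); // M10.0 10.0 L60.0 10.0 L60.0 60.0 L10.0 60.0 L10.0 10.0
    }
}
```

Since every middle step has the same Turtle-in, Turtle-out shape, steps can be added, removed or reordered without the head or the tail knowing.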

As a matter of convenience I am changing the drawing process so that all new drawings start with a “find home”. If your machine does not recognize the find home command, nothing changes. If your machine has find home, you don’t have to remember to do it yourself before starting a drawing. This more closely matches the behavior seen in 3D printers. Convenient!

The code can be found at https://github.com/MarginallyClever/Makelangelo-software. It is written in Java. There is a main branch for releases and a dev branch for unreleased, maybe-broken current work.

The best way to contribute new code is to fork the dev branch, make your changes, and then make a pull request. If you don’t know what those things are, please see this GitHub for Beginners tutorial.

If this has inspired you with new ideas or questions, please discuss it in the Makelangelo forums.

This is a big interest of mine because of my work on the Makelangelo Software. The software converts a photo to lines by starting with stippling, where dots are placed on the picture in a meaningful way. Everything that flows from there depends on good stippling. Wang tiles are the current best method; prove me wrong.
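Wang tiles are beyond a short example, but the basic idea of stippling can be shown with a much simpler technique: weighted random sampling, where a random point is kept with a probability equal to the darkness of the pixel under it. This is not the Makelangelo method, and purely random dots tend to clump where Wang-tile (blue-noise) methods aim to space them evenly. `RandomStipple` and its parameters are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Random;

public class RandomStipple {
    /** One dot, in pixel coordinates. */
    record Dot(double x, double y) {}

    /**
     * Weighted random stippling by rejection sampling: pick a random point and keep it
     * with probability equal to the darkness of the pixel under it.
     * gray[row][col] runs from 0 (black) to 255 (white).
     */
    static List<Dot> stipple(int[][] gray, int count, long seed) {
        Random random = new Random(seed);
        int height = gray.length;
        int width = gray[0].length;
        List<Dot> dots = new ArrayList<>();
        long maxAttempts = 1000L * count; // stops an all-white image from looping forever
        for (long attempt = 0; attempt < maxAttempts && dots.size() < count; attempt++) {
            double x = random.nextDouble() * width;  // in [0, width)
            double y = random.nextDouble() * height; // in [0, height)
            double darkness = 1.0 - gray[(int) y][(int) x] / 255.0;
            if (random.nextDouble() < darkness) {
                dots.add(new Dot(x, y));
            }
        }
        return dots;
    }

    public static void main(String[] args) {
        // Left half black, right half light gray: most dots should land on the left.
        int[][] image = new int[10][20];
        for (int[] row : image) {
            for (int col = 0; col < row.length; col++) row[col] = col < 10 ? 0 : 200;
        }
        List<Dot> dots = stipple(image, 500, 42);
        long left = dots.stream().filter(d -> d.x() < 10).count();
        System.out.println(dots.size() + " dots, " + left + " on the dark half");
    }
}
```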

I don’t have a way to make Wang tiles as described in this video yet. If I had a set, I’m confident I could do the rest in short order.